Fully Automated AI/ML Infrastructure to build, train and deploy AI models 10x faster

At 80% lower cost than AWS SageMaker

What if you could set up your AI/ML infrastructure and pipelines in a few hours, and reduce your total cost of ownership by 80%?

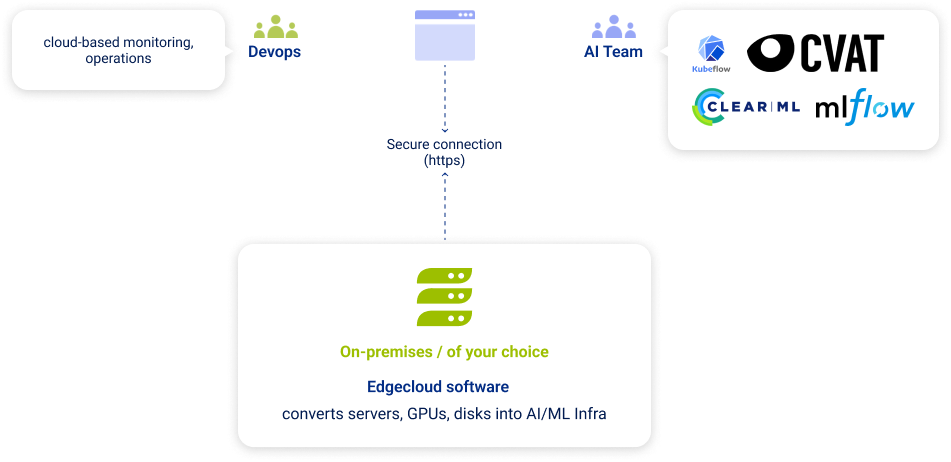

Edgebricks makes it happen by providing a fully automated software platform that converts servers, GPUs and disks into AI/ML infrastructure.

AI developers

We provide a CloudConsole where they can develop, train, and deploy AI models in one place.

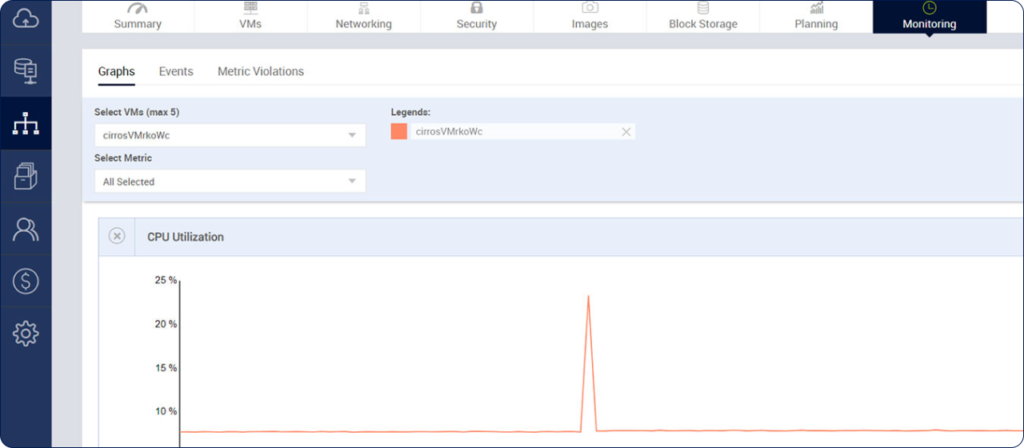

DevOps teams

We provide cloud-based monitoring, control, and MLOps with the security and compliance that you need.

CloudConsole

Self-service consumption for AI Developers

Empowering AI teams to deliver AI/ML applications faster

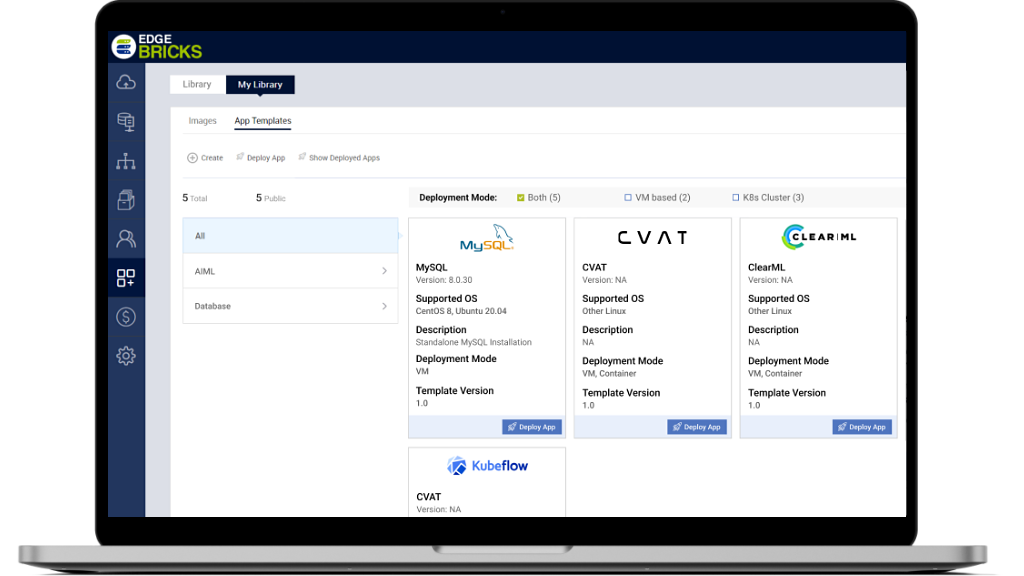

If you are looking for a simple way to create a scalable machine learning solution using open source tools, Edgebricks can help you achieve your goals. Using the built-in app library, your team can quickly deploy the different sets of tools needed to build, test, and launch AI models into production. AI developers get a simple cloud console to access these tools and don’t have to worry about any infrastructure details.

Built-in App Store with Best-of-Breed AI/ML Apps

The built-in App Store lists dozens of AI/ML tools. We have automated the deployment and stitching together of these tools to build various workflows and pipelines as needed. Examples include object detection, data labeling, NLP, and recommendation systems, among others.

Built-in Object Store and NFS Volumes for Data Sharing

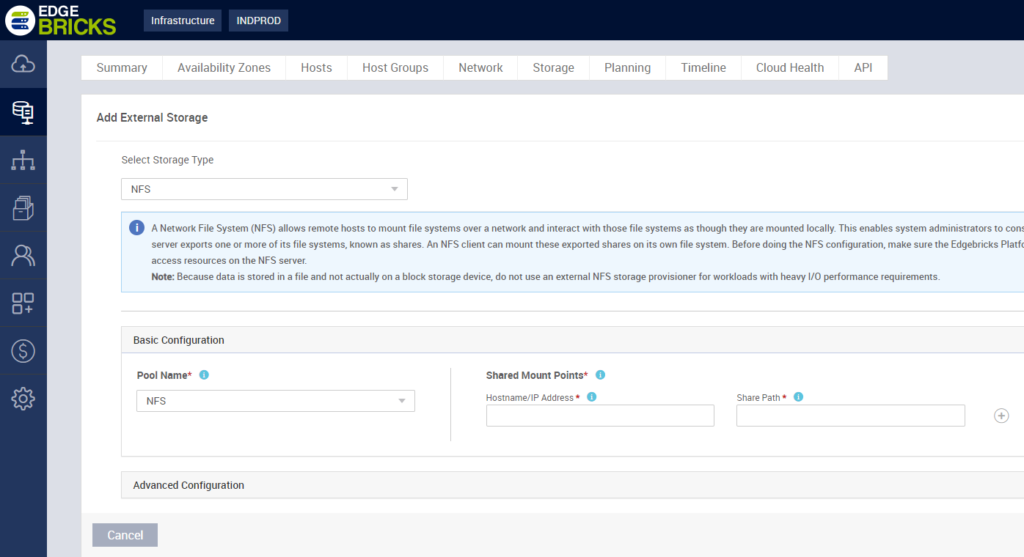

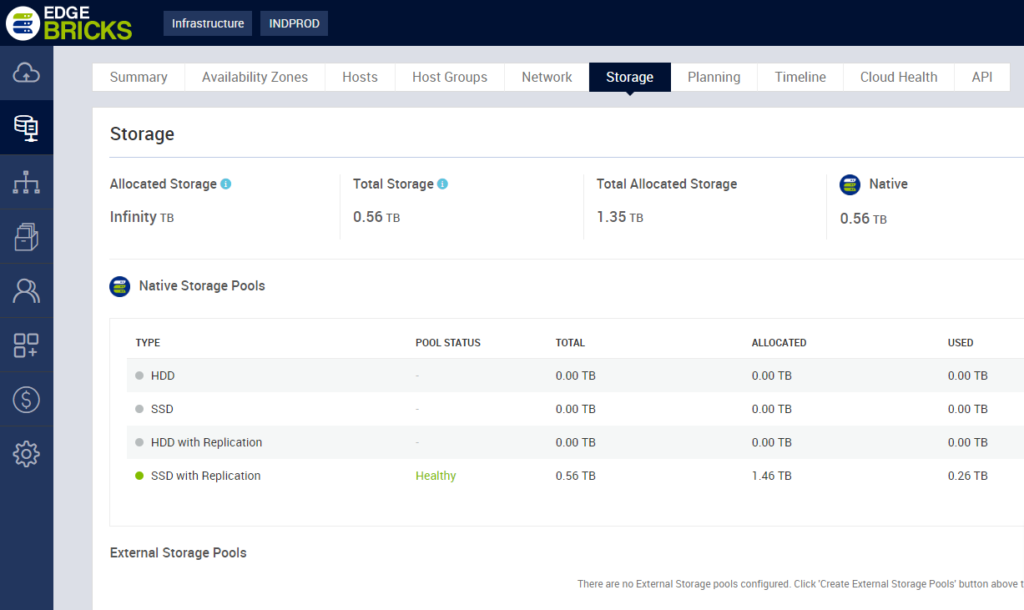

Edgebricks has a built-in object store to handle your images, videos and other data close to the compute. This data can be stored either in an object store or on NFS servers for easy sharing across the various stages of a pipeline. This significantly lowers your data storage cost and speeds up model training with faster access to data.

Leverage Public Cloud Resources or Local Storage If Needed

Edgebricks integrates with public cloud and local storage, so you don’t have to worry about scaling resources across multiple environments or having different teams manage different architectures.